The ODAC Caching Engine That Thinks

Caching is usually a "set it and forget it" gamble or a "tweak until it breaks" nightmare. Most developers treat their cache like a dumb bucket: you throw data in, set a Time-To-Live (TTL), and hope you don't run out of RAM. But servers aren't static buckets, and your application's traffic certainly isn't a steady stream.

With ODAC v1.8.0, we decided to stop gambling. We built a native, Go-based Adaptive Caching Engine that doesn't just store data; it understands the environment it runs in. It monitors your server's physical memory in real time and makes executive decisions about what deserves to stay and what needs to go.

The Ghost in the Machine: Adaptive Memory Awareness

The core "Why" behind this upgrade was architectural resilience. Traditional caching layers are often blind to the host's health. If your application suddenly spikes in memory usage, a "dumb" cache will keep hogging its pre-allocated slice until the OOM (Out Of Memory) killer knocks on your door.

ODAC's new engine is different. It implements a "Memory Floor" strategy. By monitoring system RAM every few seconds, the cache calculates a dynamic ceiling. If your server is a 64GB powerhouse, the cache expands to offer multi-gigabyte performance. If you are running on a 512MB tiny VPS, it shrinks its footprint to ensure your mission-critical processes have breathing room.

When free RAM falls to a critical threshold (30%), the engine switches to "High-Impact Mode." It stops admitting new low-frequency assets and aggressively evicts anything that isn't actively "hot." If free RAM drops below 20%, the cache disables itself entirely to save the server. This is zero-config infrastructure that actually protects you.
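To make the thresholds concrete, here is a minimal Go sketch of the mode switch described above. It is not the engine's actual source: the names Mode, SelectMode, and the two constants are hypothetical, and the free and total RAM figures are assumed to come from the operating system (for example /proc/meminfo on Linux).

```go
package main

// Mode describes how the cache behaves at a given level of memory pressure.
// The type and its values are illustrative names, not ODAC identifiers.
type Mode int

const (
	ModeNormal     Mode = iota // admit and evict as usual
	ModeHighImpact             // stop admitting cold entries, evict aggressively
	ModeDisabled               // bypass the cache entirely
)

// Thresholds from the text, as a percentage of total RAM that is still free.
const (
	highImpactAtPct = 30
	disableBelowPct = 20
)

// SelectMode maps the amount of free system RAM to a cache mode.
// Integer arithmetic avoids floating-point rounding at the boundaries.
func SelectMode(freeBytes, totalBytes uint64) Mode {
	if totalBytes == 0 {
		return ModeDisabled // no reliable reading: fail safe
	}
	switch {
	case freeBytes*100 < totalBytes*disableBelowPct:
		return ModeDisabled
	case freeBytes*100 <= totalBytes*highImpactAtPct:
		return ModeHighImpact
	default:
		return ModeNormal
	}
}
```

Called every few seconds with fresh readings, a function like this gives the rest of the cache a single value to branch on. In this sketch a server sitting at exactly 20% free RAM is still in High-Impact Mode, because the text says the cache disables itself only below 20%.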

Beyond TTL: The Two-Hit Stability Rule

Static page caching is dangerous. If you cache a page that contains a unique CSRF token or a "Welcome, [Name]" header, you've just leaked private data. Most platforms solve this with complex configuration files. We solved it with math.

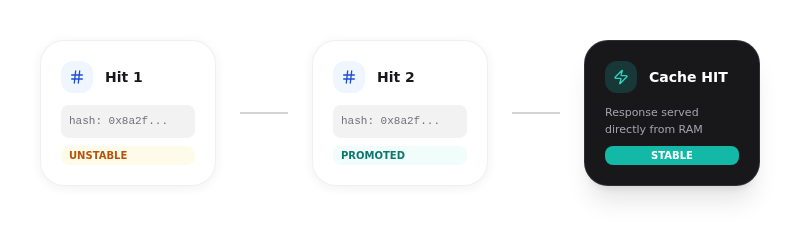

The ODAC Page Cache uses a "Stability Detection" mechanism powered by FNV-1a hashing. When you opt in to caching for a specific route, the proxy doesn't immediately store the result. Instead, it hashes the response body and waits.

Only after the proxy sees two consecutive, identical responses for the same URL does it promote that page to the "Stable" cache. This "Two-Hit Rule" ensures that dynamic pages with per-request variance are never accidentally served to the wrong user. It is a safety-first approach that requires zero manual regex rules.
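The Two-Hit Rule can be sketched in a few lines of Go using the standard hash/fnv package. This is an illustration under assumptions, not the proxy's real code: StabilityTracker, Observe, and hashBody are hypothetical names, and the 64-bit FNV-1a variant is a guess, since the hash width isn't stated here.

```go
package main

import (
	"hash/fnv"
	"sync"
)

// StabilityTracker remembers the hash of the last response body seen per URL.
// (Hypothetical type, for illustration only.)
type StabilityTracker struct {
	mu   sync.Mutex
	last map[string]uint64
}

// NewStabilityTracker returns an empty tracker.
func NewStabilityTracker() *StabilityTracker {
	return &StabilityTracker{last: make(map[string]uint64)}
}

// hashBody returns the 64-bit FNV-1a hash of a response body.
func hashBody(body []byte) uint64 {
	h := fnv.New64a()
	h.Write(body) // Write on a hash.Hash never returns an error
	return h.Sum64()
}

// Observe records a response and reports whether it matches the previous
// response for the same URL, i.e. whether the page has now been seen twice
// in a row with an identical body and may be promoted to the stable cache.
func (t *StabilityTracker) Observe(url string, body []byte) bool {
	sum := hashBody(body)

	t.mu.Lock()
	defer t.mu.Unlock()

	prev, seen := t.last[url]
	t.last[url] = sum
	return seen && prev == sum
}
```

A page that embeds a fresh CSRF token on every request produces a different hash each time, so Observe never returns true for it and it is never promoted.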

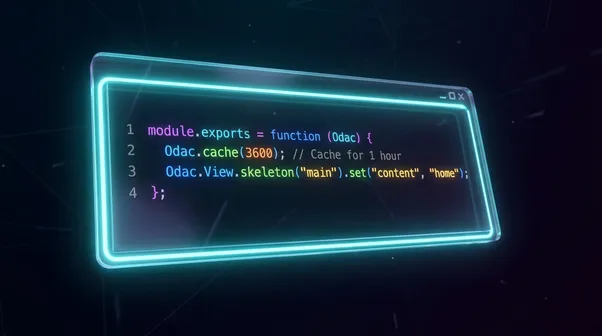

To opt in, send a header from your application:

X-Odac-Cache: 3600

Vary: Accept, X-Odac, X-Requested-With

You can manage this from the app.odac.run dashboard by adjusting your app settings, or, if you prefer to stay in code, your application just needs to emit the X-Odac-Cache header. The ODAC Proxy handles the rest, including background revalidation, so your users never wait on a backend fetch.
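If your backend happens to be written in Go, emitting the opt-in headers might look like the sketch below. The helper setCacheHeaders and the handler pricingHandler are hypothetical names, and the header value is treated as a TTL in seconds, which is an assumption based on the 3600 in the example above.

```go
package main

import (
	"net/http"
	"strconv"
)

// setCacheHeaders marks a response as cacheable by the ODAC Proxy.
// ttlSeconds is assumed to be the cache lifetime in seconds.
func setCacheHeaders(h http.Header, ttlSeconds int) {
	h.Set("X-Odac-Cache", strconv.Itoa(ttlSeconds))
	// Same Vary value as the example above.
	h.Set("Vary", "Accept, X-Odac, X-Requested-With")
}

// pricingHandler serves a page with no per-user content, which makes it
// a reasonable candidate for the page cache.
func pricingHandler(w http.ResponseWriter, r *http.Request) {
	setCacheHeaders(w.Header(), 3600)
	w.Header().Set("Content-Type", "text/html; charset=utf-8")
	w.Write([]byte("<h1>Pricing</h1>"))
}
```

Registering the handler with http.HandleFunc("/pricing", pricingHandler) is enough on the application side. Routes that never set the header stay uncached.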

103 Early Hints: The Zero-Latency Head Start

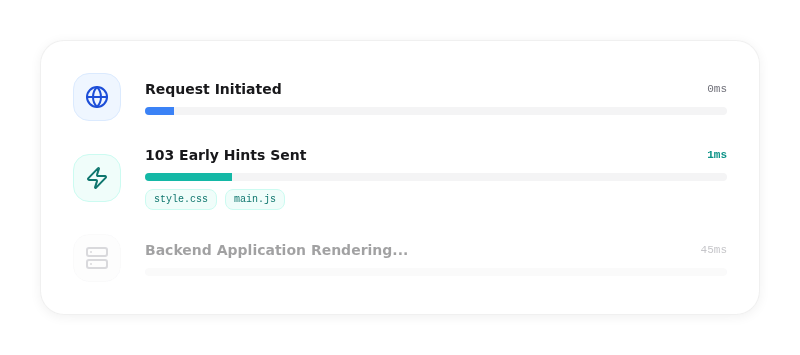

The fastest request is the one the browser starts before you even send the main response. ODAC v1.8.0 now supports automated HTTP 103 Early Hints. While your backend is still processing a heavy database query, the ODAC Proxy can already tell the browser which CSS and JS files it will need.

Our engine "learns" these hints by watching for Link headers marked rel=preload in your responses. Once it identifies stable assets, it caches the hint itself. The next time a user requests that page, the proxy sends the 103 Early Hints response immediately, often in under a millisecond, before even talking to your application.

By the time your app finishes rendering the HTML, the browser has already finished downloading the render-blocking CSS. It is a massive win for Largest Contentful Paint (LCP) and overall perceived performance.
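As a rough illustration of the learning step, the Go sketch below pulls rel=preload entries out of a response's Link headers and replays them as a 103 response. It is a simplification under assumptions, not the proxy's implementation: preloadLinks, isPreload, and sendEarlyHints are hypothetical names, the parser splits on commas and does not handle URLs that contain commas or rel values with several tokens, and sending 1xx responses through WriteHeader requires Go 1.19 or later.

```go
package main

import (
	"net/http"
	"strings"
)

// preloadLinks returns the Link header entries that are marked rel=preload.
func preloadLinks(h http.Header) []string {
	var out []string
	for _, value := range h.Values("Link") {
		// One header line may carry several comma-separated link values.
		for _, entry := range strings.Split(value, ",") {
			entry = strings.TrimSpace(entry)
			if isPreload(entry) {
				out = append(out, entry)
			}
		}
	}
	return out
}

// isPreload reports whether a single link value has a rel=preload parameter.
func isPreload(entry string) bool {
	params := strings.Split(entry, ";")
	for _, p := range params[1:] { // params[0] is the <url> part
		p = strings.ToLower(strings.TrimSpace(p))
		p = strings.ReplaceAll(p, `"`, "")
		if p == "rel=preload" {
			return true
		}
	}
	return false
}

// sendEarlyHints writes a 103 Early Hints response carrying the given links.
// The final response can still be written to w afterwards.
func sendEarlyHints(w http.ResponseWriter, links []string) {
	if len(links) == 0 {
		return
	}
	for _, l := range links {
		w.Header().Add("Link", l)
	}
	w.WriteHeader(http.StatusEarlyHints)
}
```

On the application side, nothing changes beyond emitting headers such as Link: </app.css>; rel=preload; as=style on your normal responses.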

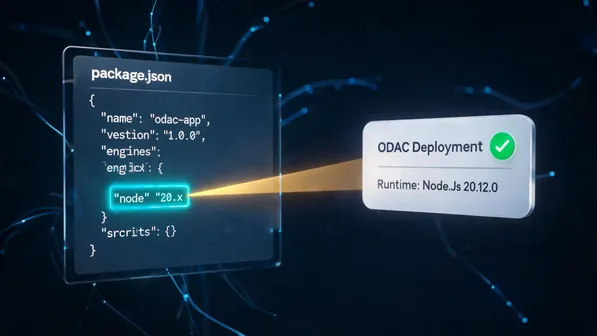

Getting Started with v1.8.0

Deploying an application with these features is as simple as ever. You can create your app with one click at app.odac.run, or use the CLI:

odac app create https://github.com/youruser/your-repo.git --name my-performance-app

The Adaptive Caching Engine is enabled by default. It is part of our commitment to building a self-hosted platform that performs like a billion-dollar cloud provider without the billion-dollar complexity. No external Redis, no complex Varnish VCL files, just pure, native Go performance running directly on your hardware.